#CITRIX XENAPP 6.5 HEALTH CHECK SCRIPT MANUAL#

It seems that there is no step-by-step PVS 6.1 and XenApp 6.5 manual anywhere online.

Using local disks was the best option in this case, with only NFS NAS stores available. The local disks in question were actually VMDKs attached to each PVS box. With NFS as the storage, all VMDKs are thin provisioned, and that is the ONLY option. To circumvent the resulting performance issues, the VMDKs were manually inflated from inside the datastore. This is somewhat of a physical operation on the NAS, and this is where the SATA disks really showed their true colors: the datastore was the only volume on an aggregate composed of 23 physical spindles.

To sync said disks, I wrote a RichCopy job run from Task Scheduler. After setting all the options, I used the "Others" tab under Advanced to get the switches. That said, the multi-threaded copy DID NOT work with any stability for these large files (they are vDisks), so I settled on a single-threaded job with a 4096K buffer. Scoff all you want, but I was able to own 14% of a 10GbE NIC and successfully replicate a 40GB vDisk from scratch in around 4-5 minutes.

The best part about running RichCopy from Task Scheduler is that you can set the job to run every hour. You can also write a log file and publish it so it can easily be opened from an icon. One thing worth noting is that the job is a totally SILENT operation. You might want to consider a separate task for manual replication that shows a pop-up at the end to tell the user it succeeded.

The troubleshooting on number 3 almost drove me insane. I traversed many avenues trying to work out why the PXE boot screen kept insisting that the ARP entry for TFTP was not found. The first was bumping the virtual machine hardware version in vSphere down to 7. Miraculously, things seemed to work after this, so we trudged forward with VM version 7 and everything seemed to be just fine. It wasn't until the next day that the symptoms started recurring.

The sporadic hit-and-miss behavior was really puzzling. It was then that I noticed things booted correctly only when the XenApp VMs were on certain hosts. Even then, we only had 100% success when they shared a host with the PVS server. When machines are on the same host on a promiscuous port group, the networking stays internal to the hypervisor. Upon further inspection of the VMware dvSwitch, it became apparent that dvUplinks 1-3 had issues, with some of the hosts actually having disconnected physical NICs.

So only dvUplink group 4 was 100% healthy. Wow, really? In an effort to troubleshoot, I drilled down even deeper. On top of that, the joker who did the original setup had allocated only 1 active and 1 standby NIC to the production storage and production network port groups. Meanwhile, the dev and test networks all had four dvUplinks. Who does this?

To circumvent our issues, I started out with a clean VLAN containing nothing. I set the only healthy dvUplink to be the only one contained in the port group. We changed all the IP addresses to correspond to the containing VLAN. Jumping from host to host yielded no issues, so this is where you would expect things to end.

I was graciously granted three hosts pulled out of the existing 28-host cluster to play with, so I began creating a second cluster in the datacenter. Here is the setup I settled on: all port groups route based on physical NIC load. The machines also needed a way to move from cluster to cluster, and dvSwitches in the same datacenter cannot share port group names. So I set up a standard vSwitch with no uplinks and one virtual machine network named Migrate. This was set up on all hosts in both the new and old clusters. VMs in need of migration could then be powered down, attached to this network, and then reattached to the correct corresponding network.

While old scripts that are not actively maintained do not get new features, they do get bug fixes.
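The uplink audit and the host-dependent PXE failures described above can be modeled in a few lines. This is a simplified sketch, not a vSphere API call. The host names and link states are hypothetical. The model also assumes each VM port leaves a host through exactly one dvUplink at a time. Load-based teaming can move ports between uplinks, which would make failures look intermittent.

```python
from typing import Dict, Optional, Set

# Hypothetical link states: host -> {dvUplink -> True if its physical NIC is connected}.
LINK_UP: Dict[str, Dict[str, bool]] = {
    "esx01": {"dvUplink1": True, "dvUplink2": False, "dvUplink3": True, "dvUplink4": True},
    "esx02": {"dvUplink1": False, "dvUplink2": True, "dvUplink3": True, "dvUplink4": True},
    "esx03": {"dvUplink1": True, "dvUplink2": True, "dvUplink3": False, "dvUplink4": True},
}


def healthy_on_all_hosts(link_up: Dict[str, Dict[str, bool]]) -> Set[str]:
    """Return the dvUplinks whose physical NIC is connected on every host."""
    healthy: Optional[Set[str]] = None
    for uplinks in link_up.values():
        up_here = {name for name, is_up in uplinks.items() if is_up}
        # Keep only uplinks that were also healthy on every host seen so far.
        healthy = up_here if healthy is None else healthy & up_here
    return healthy if healthy is not None else set()


def can_reach(vm_host: str, server_host: str, vm_uplink: str,
              server_uplink: str, link_up: Dict[str, Dict[str, bool]]) -> bool:
    """Rough model of whether a PXE/TFTP exchange with the PVS server can succeed.

    Same host: frames stay on the hypervisor's virtual switch, so the
    physical NICs never matter. Different hosts: each side leaves through
    the uplink its port is mapped to, and both of those NICs must be connected.
    """
    if vm_host == server_host:
        return True
    return link_up[vm_host][vm_uplink] and link_up[server_host][server_uplink]


if __name__ == "__main__":
    print(sorted(healthy_on_all_hosts(LINK_UP)))  # ['dvUplink4']
    # Same host as the PVS server: succeeds even on a dead uplink.
    print(can_reach("esx01", "esx01", "dvUplink2", "dvUplink2", LINK_UP))  # True
    # Cross-host over dvUplink1, which is disconnected on esx02.
    print(can_reach("esx01", "esx02", "dvUplink1", "dvUplink1", LINK_UP))  # False
```

In this toy model, a VM only fails when it is on a different host from the PVS server and its traffic happens to land on a disconnected NIC. That matches the pattern described in the post.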

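As a quick sanity check on the RichCopy figures (14% of a 10GbE NIC, a 40GB vDisk in around 4-5 minutes), here is the arithmetic. It assumes decimal units (1 GB = 10^9 bytes) and a constant transfer rate. The function names are hypothetical helpers, not part of RichCopy.

```python
def transfer_minutes(size_gb: float, link_gbit: float, utilization: float) -> float:
    """Minutes to move size_gb (decimal GB) at a fixed fraction of a link_gbit Gbit/s NIC."""
    bits = size_gb * 8e9                          # decimal gigabytes -> bits
    bits_per_second = link_gbit * 1e9 * utilization
    return bits / bits_per_second / 60


def implied_utilization(size_gb: float, link_gbit: float, minutes: float) -> float:
    """Average fraction of the NIC used if size_gb finished in the given minutes."""
    bits = size_gb * 8e9
    return bits / (link_gbit * 1e9 * minutes * 60)


if __name__ == "__main__":
    # 40 GB vDisk at a steady 14% of 10 GbE.
    print(round(transfer_minutes(40, 10, 0.14), 1))           # 3.8
    # Average utilization implied by finishing in 4 and in 5 minutes.
    print(round(implied_utilization(40, 10, 4) * 100, 1))     # 13.3
    print(round(implied_utilization(40, 10, 5) * 100, 1))     # 10.7
```

At a steady 14%, the raw transfer takes a little under 4 minutes. Finishing in 4-5 minutes corresponds to an average of roughly 11-13% of the link. The quoted numbers are therefore consistent if 14% is the peak rather than the average utilization.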
#CITRIX XENAPP 6.5 HEALTH CHECK SCRIPT WINDOWS#

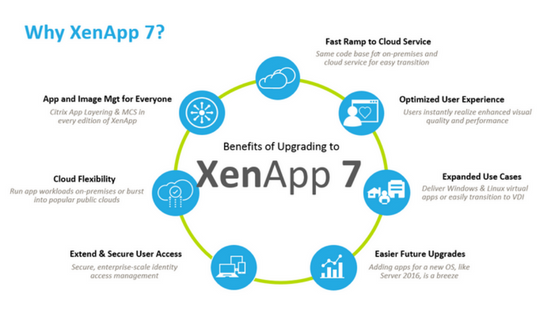

A feature that has been requested throughout the XenApp and XenDesktop documentation is scripts with the ability to document a XenApp 6.5 infrastructure. I needed to start my homelab with XenServer to create health check scripts for Citrix XenApp and XenDesktop for a customer health check. So I was looking for the best scripts to start from, and there is nothing better than Carl Webster's help. To start documenting my lab, I'm running XenServer 6.0 on a Dell R710 with 96GB of RAM and a 3TB disk array.

I have enough storage to create a small farm with 2 VDI, a StoreFront and a master image. I will start with Windows 2008 with XenApp, Windows 2012 with XenDesktop and the StoreFront, and later I will add a NetScaler virtual appliance.